
Posted by Purple-Toolz

I’m a self-funded start-up business owner. As such, I want to get as much as I can for free before convincing our finance director to spend our hard-earned bootstrapping funds. I’m also an analyst with a background in data and computer science, so a bit of a geek by any definition.

What I try to do, with my SEO analyst hat on, is hunt down great sources of free data and wrangle it into something insightful. Why? Because there’s no value in basing client advice on conjecture. It’s far better to combine quality data with good analysis and help our clients better understand what’s important for them to focus on.

In this article, I will tell you how to get started using a few free resources and illustrate how to pull together unique analytics that provide useful insights for your blog articles if you’re a writer, your agency if you’re an SEO, or your website if you’re a client or owner doing SEO yourself.

The scenario I’m going to use is that I want to analyze some SEO attributes (e.g. backlinks, Page Authority etc.) and look at their effect on Google ranking. I want to answer questions like “Do backlinks really matter in getting to Page 1 of SERPs?” and “What kind of Page Authority score do I really need to be in the top 10 results?” To do this, I will need to combine data from a number of Google searches with data on each result covering the SEO attributes that I want to measure.

Let’s get started and work through how to combine the following tasks to achieve this, which can all be set up for free:

Querying with Google Custom Search Engine

We first need to query Google and get some results stored. To stay on the right side of Google’s terms of service, we’ll not be scraping Google.com directly but will instead use Google’s Custom Search feature. Google’s Custom Search is designed mainly to let website owners provide a Google-like search widget on their website. However, there is also a REST-based Google Search API that is free and lets you query Google and retrieve results in the popular JSON format. There are quota limits but these can be configured and extended to provide a good sample of data to work with.

When configured correctly to search the entire web, you can send queries to your Custom Search Engine, in our case using PHP, and treat them like Google responses, albeit with some caveats. The main limitations of using a Custom Search Engine are: (i) it doesn’t use some Google Web Search features such as personalized results; and (ii) it may have a subset of results from the Google index if you include more than ten sites.

Notwithstanding these limitations, there are many search options that can be passed to the Custom Search Engine to approximate what you might expect Google.com to return. In our scenario, we passed the following when making a call:

https://www.googleapis.com/customsearch/v1?key=<google_api_id>&userIp=<ip_address>&cx=<custom_search_engine_id>&q=iPhone+X&cr=countryUS&start=1

Where:
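As a rough illustration of how the request URL above can be assembled, here is a minimal Python sketch (the article's own harvesting code was written in PHP and is not shown). The function name `build_cse_url` and the placeholder key, engine ID and IP address are made up for the example; the parameter names are the ones that appear in the URL above.

```python
from urllib.parse import urlencode

CSE_ENDPOINT = "https://www.googleapis.com/customsearch/v1"


def build_cse_url(api_key, engine_id, query, user_ip, country="countryUS", start=1):
    """Return a Custom Search request URL using the parameters from the example call."""
    params = {
        "key": api_key,      # Google API key (placeholder in the demo below)
        "userIp": user_ip,   # IP address passed along with the request
        "cx": engine_id,     # Custom Search Engine ID
        "q": query,          # search terms; urlencode turns spaces into '+'
        "cr": country,       # country restriction, e.g. countryUS
        "start": start,      # index of the first result to return
    }
    return CSE_ENDPOINT + "?" + urlencode(params)


# Hypothetical credentials, for illustration only.
url = build_cse_url("MY_API_KEY", "MY_ENGINE_ID", "iPhone X", "203.0.113.7")
print(url)
```

As I understand the API, `start` is the 1-based position of the first result, so calling the same function with `start=11` would ask for the second page of ten results.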

Google has said that the Google Custom Search engine differs from Google.com, but in my limited prod testing comparing results between the two, I was encouraged by the similarities and so continued with the analysis. That said, keep in mind that the data and results below come from Google Custom Search (using ‘whole web’ queries), not Google.com.

Using the free Moz API account

Moz provide an Application Programming Interface (API). To use it you will need to register for a Mozscape API key, which is free but limited to 2,500 rows per month and one query every ten seconds. Current paid plans give you increased quotas and start at $250/month. Having a free account and API key, you can then query the Links API and analyze the following metrics:

NOTE: Since this analysis was captured, Moz documented that they have deprecated these fields. However, in testing this (15-06-2019), the fields were still present.

Moz API Codes are added together before calling the Links API with something that looks like the following:

www.apple.com%2F?Cols=103616137253&AccessID=MOZ_ACCESS_ID&Expires=1560586149&Signature=<MOZ_SECRET_KEY>

Where:

Moz will return something like the following (the JSON response, shown here decoded into a PHP array):

Array
(
    [ut] => Apple
    [uu] => www.apple.com/
    [ueid] => 13078035
    [uid] => 14632963
    [umrp] => 9
    [umrr] => 0.8999999762
    [fmrp] => 2.602215052
    [fmrr] => 0.2602215111
    [us] => 200
    [upa] => 90
    [pda] => 100
)
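To make the "codes are added together" step concrete, the sketch below sums a set of field flags into the `Cols` value, builds a signed query string, and spaces calls out to respect the one-query-every-ten-seconds limit mentioned above. It is a Python illustration, not the article's PHP. The flag values and the HMAC-SHA1 signing scheme are my recollection of the legacy Mozscape documentation and should be treated as assumptions (the article's example only shows a `<MOZ_SECRET_KEY>` placeholder for the signature). The names `cols_value`, `signed_query` and `Throttle` are hypothetical.

```python
import base64
import hashlib
import hmac
import time
from urllib.parse import quote, urlencode

# Bit flags per returned field, as recalled from the legacy Mozscape
# URL Metrics docs (assumptions; check the current documentation).
MOZ_COLS = {
    "ut": 1,               # title
    "uu": 4,               # canonical URL
    "ueid": 32,            # external equity links
    "uid": 2048,           # links
    "umrp": 16384,         # MozRank, URL (umrp/umrr)
    "fmrp": 32768,         # MozRank, subdomain (fmrp/fmrr)
    "us": 536870912,       # HTTP status code
    "upa": 34359738368,    # Page Authority
    "pda": 68719476736,    # Domain Authority
}


def cols_value(fields):
    """Add the bit flags together to form the Cols request parameter."""
    return sum(MOZ_COLS[name] for name in fields)


def signed_query(target_url, access_id, secret_key, expires, cols):
    """Build '<encoded url>?Cols=...&AccessID=...&Expires=...&Signature=...'."""
    # Assumed scheme: HMAC-SHA1 over "AccessID\nExpires", keyed with the
    # secret key, then base64-encoded.
    message = f"{access_id}\n{expires}".encode()
    digest = hmac.new(secret_key.encode(), message, hashlib.sha1).digest()
    params = {
        "Cols": cols,
        "AccessID": access_id,
        "Expires": expires,
        "Signature": base64.b64encode(digest).decode(),
    }
    return quote(target_url, safe="") + "?" + urlencode(params)


class Throttle:
    """Block until at least `interval` seconds have passed since the last call."""

    def __init__(self, interval=10.0, clock=time.monotonic, sleep=time.sleep):
        self.interval, self.clock, self.sleep = interval, clock, sleep
        self.last = None

    def wait(self):
        now = self.clock()
        if self.last is not None and now - self.last < self.interval:
            self.sleep(self.interval - (now - self.last))
        self.last = self.clock()


cols = cols_value(MOZ_COLS)
print(cols)  # 103616137253, matching the Cols value in the request above
print(signed_query("www.apple.com/", "MOZ_ACCESS_ID", "MOZ_SECRET_KEY", 1560586149, cols))
```

In a harvesting loop, calling `Throttle().wait()` before each request would keep successive calls at least ten seconds apart. The clock and sleep functions are injectable so the behaviour can be checked without actually waiting.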

For a great starting point on querying Moz with PHP, Perl, Python, Ruby and Javascript, see this repository on Github. I chose to use PHP.

Harvesting data with PHP and MySQL

Now we have a Google Custom Search Engine and our Moz API, we’re almost ready to capture data. Google and Moz respond to requests via the JSON format and so can be queried by many popular programming languages. In addition to my chosen language, PHP, I wrote the results of both Google and Moz to a database and chose MySQL Community Edition for this. Other databases could also be used, e.g. Postgres, Oracle, Microsoft SQL Server etc. Doing so enables persistence of the data and ad-hoc analysis using SQL (Structured Query Language) as well as other languages (like R, which I will go over later).

After creating database tables to hold the Google search results (with fields for rank, URL etc.) and a table to hold Moz data fields (ueid, upa, pda etc.), we’re ready to design our data harvesting plan. Google provide a generous quota with the Custom Search Engine (up to 100M queries per day with the same Google developer console key) but the Moz free API is limited to 2,500 rows per month. For Moz, though, paid-for options provide between 120k and 40M rows per month depending on plan and range in cost from $250–$10,000/month. Therefore, as I’m just exploring the free option, I designed my code to harvest 125 Google queries over 2 pages of SERPs (10 results per page), giving 125 × 2 × 10 = 2,500 URLs and allowing me to stay within the Moz 2,500 row quota.

As for which searches to fire at Google, there are numerous resources to choose from. I chose to use Mondovo as they provide numerous lists by category and up to 500 words per list, which is ample for the experiment. I also rolled in a few PHP helper classes alongside my own code for database I/O and HTTP. In summary, the main PHP building blocks and sources used were:

One factor to be aware of is the 10 second interval between Moz API calls. This is to prevent Moz being overloaded by free API users. To handle this in software, I wrote a "query throttler" which blocked access to the Moz API between successive calls within a timeframe. However, whilst it worked perfectly, it meant that calling Moz 2,500 times in succession took just under 7 hours to complete (2,500 calls × 10 seconds = 25,000 seconds).

Analyzing data with SQL and R

Data harvested. Now the fun begins! It’s time to have a look at what we’ve got. This is sometimes called data wrangling. I use a free statistical programming language called R along with a development environment (editor) called R Studio. There are other languages such as Stata and more graphical data science tools like Tableau, but these cost and the finance director at Purple Toolz isn’t someone to cross! I have been using R for a number of years because it’s open source and it has many third-party libraries, making it extremely versatile and appropriate for this kind of work.

Let’s roll up our sleeves. I now have a couple of database tables with the results of my 125 search term queries across 2 pages of SERPs (i.e. 20 ranked URLs per search term). Two database tables hold the Google results and another table holds the Moz data results. To access these, we’ll need to do a database INNER JOIN, which we can easily accomplish by using the RMySQL package with R. This is installed by typing "install.packages('RMySQL')" into R’s console and loaded by including the line "library(RMySQL)" at the top of our R script. We can then do the following to connect and get the data into an R data frame variable called "theResults."

library(RMySQL)

# INNER JOIN the three tables (query, results and Moz data)
theQuery <- "
    SELECT A.*, B.*, C.*
    FROM
    (
        SELECT
            cseq_search_id
        FROM cse_query
    ) A -- Custom Search Query
    INNER JOIN
    (
        SELECT
            cser_cseq_id,
            cser_rank,
            cser_url
        FROM cse_results
    ) B -- Custom Search Results
    ON A.cseq_search_id = B.cser_cseq_id
    INNER JOIN
    (
        SELECT *
        FROM moz
    ) C -- Moz Data Fields
    ON B.cser_url = C.moz_url
    ;
"

# [1] Connect to the database
# Replace USER_NAME with your database username
# Replace PASSWORD with your database password
# Replace MY_DB with your database name
theConn <- dbConnect(dbDriver("MySQL"), user = "USER_NAME", password = "PASSWORD", dbname = "MY_DB")

# [2] Query the database and hold the results
theResults <- dbGetQuery(theConn, theQuery)

# [3] Disconnect from the database
dbDisconnect(theConn)
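If you want to try the join logic without a MySQL server, the following Python sketch runs an equivalent three-table INNER JOIN against an in-memory SQLite database. The table and column names are taken from the query above; the rows are invented purely for illustration, and SQLite stands in for MySQL here.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE cse_query   (cseq_search_id INTEGER);
    CREATE TABLE cse_results (cser_cseq_id INTEGER, cser_rank INTEGER, cser_url TEXT);
    CREATE TABLE moz         (moz_url TEXT, moz_ueid INTEGER, moz_upa INTEGER);

    -- Made-up rows: one query, two ranked results, Moz data for only one URL.
    INSERT INTO cse_query   VALUES (1);
    INSERT INTO cse_results VALUES (1, 1, 'example.com/a');
    INSERT INTO cse_results VALUES (1, 2, 'example.com/b');
    INSERT INTO moz         VALUES ('example.com/a', 40, 55);
""")

rows = conn.execute("""
    SELECT B.cser_rank, B.cser_url, C.moz_ueid, C.moz_upa
    FROM cse_query A
    INNER JOIN cse_results B ON A.cseq_search_id = B.cser_cseq_id
    INNER JOIN moz C         ON B.cser_url = C.moz_url
    ORDER BY B.cser_rank
""").fetchall()
conn.close()

print(rows)
```

One thing this makes visible: because the joins are INNER JOINs, a search result with no matching Moz row (here `example.com/b`) drops out of the combined data set.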

NOTE: I have two tables to hold the Google Custom Search Engine data. One holds data on the Google query (cse_query) and one holds results (cse_results).

We can now use R’s full range of statistical functions to begin wrangling. Let’s start with some summaries to get a feel for the data. The process I go through is basically the same for each of the fields, so let’s illustrate and use Moz’s ‘UEID’ field (the number of external equity links to a URL). By typing the following into R I get this:

> summary(theResults$moz_ueid)
   Min. 1st Qu.  Median    Mean 3rd Qu.    Max.
      0       1      20   14709     182 2755274

> quantile(theResults$moz_ueid, probs = c(1, 5, 10, 25, 50, 75, 80, 90, 95, 99, 100)/100)
       1%        5%       10%       25%       50%       75%       80%       90%       95%       99%      100%
      0.0       0.0       0.0       1.0      20.0     182.0     337.2    1715.2    7873.4  412283.4 2755274.0

Looking at this, you can see from the relationship of the median (20) to the mean (14,709) that the data is heavily skewed: the mean is being pulled up by values in the upper quartile range (values beyond 75% of the observations). We can, however, plot this as a box and whisker plot in R where each X value is the distribution of UEIDs by rank from Google Custom Search position 1-20. Note we are using a log scale on the y-axis so that we can display the full range of values as they vary a lot!

Box and whisker plots are great as they show a lot of information (see the geom_boxplot function in R). The purple boxed area represents the Inter-Quartile Range (IQR), which covers the values between 25% and 75% of observations. The horizontal line in each ‘box’ represents the median value (the one in the middle when ordered), whilst the lines extending from the box (called the ‘whiskers’) reach out to the most extreme observations within 1.5x IQR of the box. Dots outside the whiskers are called ‘outliers’ and show where the extents of each rank’s set of observations are. Despite the log scale, we can see a noticeable pull-up from rank #10 to rank #1 in median values, indicating that the number of equity links might be a Google ranking factor.

Let’s explore this further with density plots. Density plots are a lot like distributions (histograms) but show smooth lines rather than bars for the data. Much like a histogram, a density plot’s peak shows where the data values are concentrated and can help when comparing two distributions. In the density plot below, I have split the data into two categories: (i) results that appeared on Page 1 of SERPs (ranked 1-10) are in pink; and (ii) results that appeared on SERP Page 2 are in blue. I have also plotted the medians of both distributions to help illustrate the difference in results between Page 1 and Page 2.

The inference from these two density plots is that Page 1 SERP results had more external equity backlinks (UEIDs) than Page 2 results. You can also see the median values for these two categories below, which clearly show how the value for Page 1 (38) is far greater than Page 2 (11). So we now have some numbers to base our SEO strategy for backlinks on.

# Create a factor in R according to which SERP page a result (cser_rank) is on
> theResults$rankBin <- paste("Page", ceiling(theResults$cser_rank / 10))
> theResults$rankBin <- factor(theResults$rankBin)

# Now report the medians by SERP page by calling ‘tapply’
> tapply(theResults$moz_ueid, theResults$rankBin, median)
Page 1 Page 2
    38     11
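For readers more at home in Python than R, the same "bin by SERP page, then take the median" logic can be sketched as follows. The (rank, links) pairs are made up for illustration and are not the article's data; `serp_page` and `median_by_page` are hypothetical helper names.

```python
import math
from collections import defaultdict
from statistics import median

# Invented (rank, external equity links) pairs standing in for theResults.
rows = [(1, 120), (4, 40), (9, 15), (12, 30), (15, 9), (20, 2)]


def serp_page(rank, per_page=10):
    """Map a 1-based rank to its SERP page: ranks 1-10 give 1, ranks 11-20 give 2."""
    return math.ceil(rank / per_page)


def median_by_page(rows):
    """Group the metric by SERP page and return the median of each group."""
    groups = defaultdict(list)
    for rank, value in rows:
        groups[f"Page {serp_page(rank)}"].append(value)
    return {page: median(values) for page, values in sorted(groups.items())}


print(median_by_page(rows))  # {'Page 1': 40, 'Page 2': 9}
```

The `math.ceil(rank / 10)` step plays the role of `ceiling(theResults$cser_rank / 10)` in the R code, and the dictionary of medians corresponds to the `tapply` output.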

From this, we can deduce that equity backlinks (UEID) matter and if I were advising a client based on this data, I would say they should be looking to get over 38 equity-based backlinks to help them get to Page 1 of SERPs. Of course, this is a limited sample and more research, a bigger sample and other ranking factors would need to be considered, but you get the idea.

Now let’s investigate another metric that has less of a range than UEID and look at Moz’s UPA measure, which is the likelihood that a page will rank well in search engine results.

> summary(theResults$moz_upa)
   Min. 1st Qu.  Median    Mean 3rd Qu.    Max.
   1.00   33.00   41.00   41.22   50.00   81.00

> quantile(theResults$moz_upa, probs = c(1, 5, 10, 25, 50, 75, 80, 90, 95, 99, 100)/100)
  1%   5%  10%  25%  50%  75%  80%  90%  95%  99% 100%
  12   20   25   33   41   50   53   58   62   75   81

UPA is a number given to a URL and ranges between 0–100. The data is better behaved than the previous unbounded UEID variable: its mean and median are close together, making for a more ‘normal’ distribution, as we can see below by plotting a histogram in R.

We’ll do the same Page 1 : Page 2 split and density plot that we did before and look at the UPA score distributions when we divide the UPA data into two groups.

# Report the medians by SERP page by calling ‘tapply’
> tapply(theResults$moz_upa, theResults$rankBin, median)
Page 1 Page 2
    43     39

In summary, two very different distributions from two Moz API variables. But both showed differences in their scores between SERP pages and provide you with tangible values (medians) to work with and ultimately advise clients on or apply to your own SEO.

Of course, this is just a small sample and shouldn’t be taken literally. But with free resources from both Google and Moz, you can now see how you can begin to develop analytical capabilities of your own to base your assumptions on rather than accepting the norm. SEO ranking factors change all the time and having your own analytical tools to conduct your own tests and experiments will help give you credibility and perhaps even a unique insight on something hitherto unknown.

Google provide you with a healthy free quota to obtain search results from. If you need more than the 2,500 rows/month Moz provide for free, there are numerous paid-for plans you can purchase. MySQL is a free download and R is also a free package for statistical analysis (and much more). Go explore!

Sign up for The Moz Top 10, a semimonthly mailer updating you on the top ten hottest pieces of SEO news, tips, and rad links uncovered by the Moz team. Think of it as your exclusive digest of stuff you don't have time to hunt down but want to read!

via Blogger SEO Analytics for Free - Combining Google Search with the Moz API


Posted by SamuelMangialavori

It's undeniable that the SERPs have changed considerably in the last year or so. Elements like featured snippets, Knowledge Graphs, local packs, and People Also Ask have really taken over the SEO world, and left some of us a bit confused.

In particular, the People Also Ask (PAA) feature caught my attention in the last few months. For many of the clients I've worked with, PAAs have really had an impact on their SERPs. If you are anything like me, you might be asking yourself the same questions:

The first part of the post focuses on five things I've learned about People Also Ask, while the second part outlines some ideas on how to take advantage of such features. Let’s get started!

Here are five things you should know about PAAs.

1. PAA can occupy different positions on the SERP

I don’t know about you all, but I wasn't fully aware of the above until a few months ago; I just assumed that most of the time PAAs appeared in the same location, if and only if they were actually triggered by Google. I didn't really pay attention to this feature until I started digging into it. Distinct from featured snippets (which always appear at the top of the SERP), PAAs can be located in several different parts of the page. Let’s look at some examples:

Keyword example: [dj software]

For the keyword [dj software], this is what the SERP looks like:

Keyword example: [cocktail dresses under 50 pounds]

For the keyword [cocktail dresses under 50 pounds], this is what the SERP looks like:

Keyword example: [tv unit]

For the keyword [tv unit], this is what the SERP looks like:

Why does this matter to you?

The position of PAAs in the SERP affects the CTR of organic results, especially on mobile, where space is very precious.

2. Do PAAs have a limit?

I'm just giving away the answer now: No-ish. This feature has the ability to trigger a potentially infinite number of questions on the topic of interest. As Britney Muller researched in this Moz post, the initial 3–4 listings could continue into the hundreds once clicked on, in some cases. With one simple click, the 4 PAA questions can trigger three more listings, and so on and so forth.

Has the situation changed at all since the original 2016 Moz article?

Yes, it has! What I'm seeing now is actually very mixed: PAAs can vary extensively, from a fixed number of 3–4 listings to a plethora of results. Let’s look at an example of a query that's showing a large number of PAAs:

Keyword example: [featured snippets]

For the query [featured snippets], the PAA listings expand when clicked on, which generates a large number of new PAA listings at the bottom of the feature. For other queries, Google will only show you 4 PAA listings, and that number will not change even if the listings get clicked on:

Keyword example: [best italian wine]

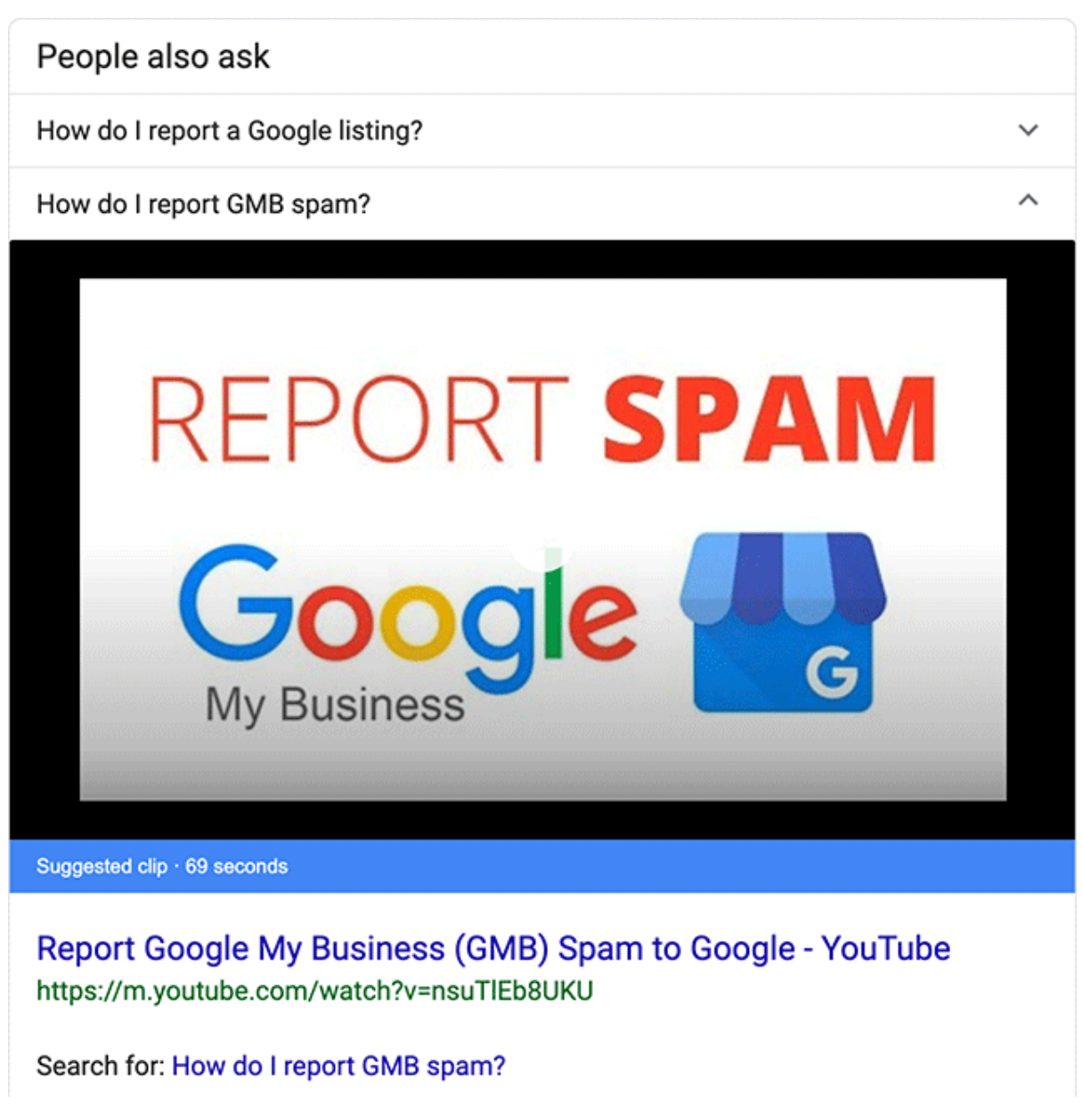

For the query [best italian wine], the PAA listings cannot be expanded, no matter how many times you hover or click on them. Interestingly, it also appears that Google does not keep this feature consistent: a few days after I took the above screenshots, the fixed number of PAAs was gone. On the other hand, I've recently seen instances where the keywords have a fixed amount of only 3 PAAs instead of 4.

Now, the real question for Google would be: “What methodology are they using to decide which keywords trigger an infinite amount of PAAs and which keywords cannot?” As you might have guessed by now, I don’t have an answer today. I'll continue to work on uncovering it and keep you folks posted when/if I get an answer from Google or discover further insights.

My two cents on the above:

The number of PAAs does not relate to particular verticals or keyword patterns at the moment, though this may change in the future (e.g. comparative keywords more or less inclined to a fixed amount of PAAs). Google’s experiments will continue, and they may change PAAs quite a bit in the next one to two years. I wouldn't be surprised if we saw questions being answered in different ways. Read the next point to know more!

Why does this matter to you?

From an opportunity standpoint, the number of questions you can scrape to take advantage of will vary. From a user standpoint, it impacts your search journey and offers a different number of answers to your questions.

3. PAAs can trigger video results

I came across this by reading an article on Search Engine Roundtable.

I wasn't able to replicate the above result myself in London — but that doesn't matter, as we're used to seeing Google experimenting with new features in the US first. Answering a PAA listing with a video makes a lot of sense, especially if you consider the nature of many of the queries listed:

And so on. I expect this to be tested more and more by Google, to a point where most of the keywords that are currently showing video results in the SERPs will trigger video results in the PAA listings, too. Keyword example: [how to clean suede shoes diy]

Video results will matter more and more in the near future. Why is that? Just examine how hard Google is working on the interpretation and simplification of video results. Google has added key moments for videos in search results (read this article to know more). This new feature allows us to jump to the portion of the video that answers our specific query.

Why does this matter to you?

From an opportunity standpoint, you can optimize your YouTube and video results to be eligible to appear in PAAs. From a user standpoint, it enriches your search journey for PAA queries that are better answered with videos.

4. PAA questions are frequently repeated for the same search topic and also trigger featured snippets

This might be obvious, but it's important to understand these three points:

Let’s look at some examples to better visualize what I mean:

1. PAA questions also trigger featured snippets

Keyword 1: [business card ideas] Keyword 2: [what is on a good business card?]

The keyword [business card ideas] triggers some PAA listings, whose questions, if used as the main query, trigger a featured snippet.

2. Different keywords can trigger the same PAA question and show the same result.

The same listing that appears for a PAA question for keyword X can also appear for the same question, triggered by a different keyword Y.

Keyword 1: [quality business cards] Keyword 2: [business cards quality design]

To summarize: Different keywords, same question in the PAA and same listing in the PAA.

3. Different questions listed in a PAA triggered by different keywords can show the same result.

The same listing that appears for a PAA question for keyword X can also appear for a different question, triggered by a different keyword Y.

Keyword 1: [quality business cards] Keyword 2: [best business cards online]

To summarize: Different keywords, different question in the PAA but same listing in the PAA. The above keywords are clearly different, but they show the same intent: “I'm looking for a business card by using terms that highlight certain defining attributes: best & quality.” Small Biz Trends in the above screenshot has created a page that matches that particular intent. Keyword intent is a crucial topic that the SEO community has been talking about for a few years now.

Why does this matter to you?

From an opportunity standpoint, your PAA listings can trigger featured snippets and can also cover a portfolio of different keyword permutations.

5. PAAs have a feedback feature

Most of you have probably glanced over this feature but never really paid attention to it: at the bottom of the last PAA listing, there is often a little hyperlink with the word Feedback. By clicking on it, you're shown the following pop-up:

Google states that this option is available “on some search results” and it allows users to send feedback or suggest a translation. Even if you do go through the effort, Google says they will not reply to you directly, but rather collect the info submitted and work on the accuracy of the listings.

Does this mean they'll actually change the PAA listing based off of feedback?

Unfortunately, I don’t have an answer for this (I've tried to submit feedback manually and nothing really happened) but I think it's very unlikely. The only for-sure thing you get from Google is the following response:

Why does this matter to you?

From an opportunity standpoint, if you notice that PAA listings (for questions you are trying to appear for) are not accurate, you can flag it to Google and hope they'll change it.

Now that we've covered some interesting facts, how can we take advantage of PAA?

Determine how deeply your SERP is being affected by PAA (and other SERP features)

This task is fairly straightforward, but I guarantee you very few people actually pay much attention to it. When monitoring your rankings, you should really try to dig deeply into which other elements are affecting your overall organic traffic & organic CTR. Start by asking yourself the following questions:

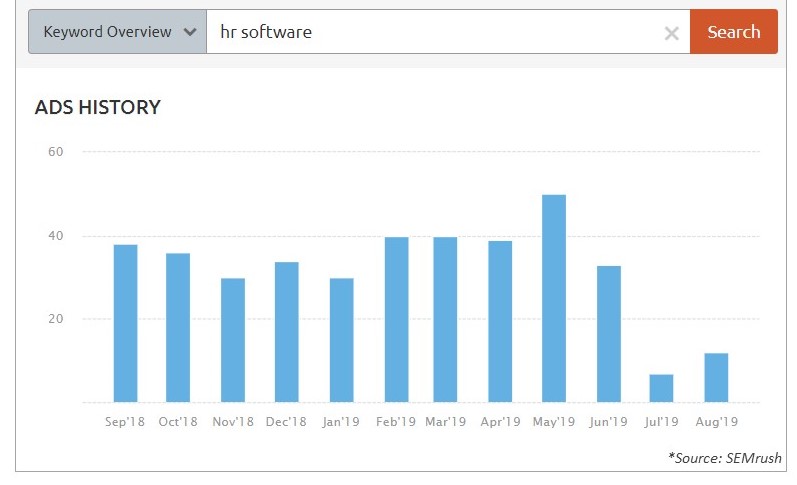

You might spot an increasing amount of paid results (in the form of shopping ads for products or text ads for services) appearing for many of your key terms. Established tools like SEMrush, Sistrix, and Ahrefs can show you the number of ads, overall spending, & how the ads look at a keyword level. Kw: [hr software]

Or it may be the case that organic SERP elements, such as video results, are being triggered in the SERP for many of your informational queries, or that featured snippets appear for a high percentage of your navigational & transactional terms, and so on. Recently, I came across a client where over 90% of their primary keywords triggered PAAs at the top of the SERP. 90%!

Which tools can help?

At Distilled we use STAT, which reports on such insights in a really comprehensive manner with a great overview of all the SERP elements. This is what the STAT SERP features interface looks like:

Ahrefs also does a great job of allowing you to download the SERP features of the top twenty results for any of the keywords you're interested in. Understanding where you stand in the current SERP landscape & how your SEO has been affected by it is a crucial step prior to implementing any SERP strategy.

Tactics to take advantage of PAAs

There are several ways to incorporate PAAs into your SEO strategy. It's already been written about many times online, so I'm going to keep it simple and focus on a few easy tactics that I think will really improve your workflow:

1. Extract PAA listings

This one's pretty straightforward: how can we take advantage of PAAs if we cannot find a way to extract those questions in the first place? There are several ways to “scrape” PAAs, more or less compliant with Google’s Terms & Conditions (such as using Screaming Frog). Personally, I like STAT’s report, so I'll talk about how easy it is to extract PAA listings using this tool:

This is an example of what the report looks like after you've downloaded the “People also ask (Google)” report:

2. Address questions in your content

Once you have a list of all PAA questions and you are able to see which URLs rank for such results, what should you do next? This is the more complicated part: think how your content strategy can incorporate PAA findings and start experimenting. Similarly to featured snippets, PAAs should be included in your content plan. If that's not yet the case, well, I hope this blog post can convince you to give it a go! Since I am not focusing (sadly, for some) on content strategy with this article, I will not dwell on the topic too much. Instead, I'll share a few tips on what you could do with the data gathered so far:

Understand what type of results such PAA questions are triggering: are they informational, navigational, transactional?

Many people think featured snippets and PAA questions are triggered by heavily informational or Q&A pages: trust me, do NOT assume anything. Check your data and behave accordingly. Keyword intent should never be taken for granted.

Create or re-optimize your content

Depending on the findings in the previous point, it may be a matter of creating new content that can address PAA questions or re-optimizing the existing content on your site. If you discover that you have a chance at ranking in a PAA with your current transactional/editorial pages, it might be best to re-optimize what you have. It may also be the case that one of the following options can be enough to rank in PAAs:

If you do not have any content to cover a certain keyword theme, think about creating new pages that would match the keyword intent that Google is favoring. Editorial content with SEO in mind (don’t limit yourself to PAA, but look at the overall SERP spectrum) or simple FAQ pages could really help win PAA or featured snippets.

Depending on your KPIs (traffic, leads, signups, etc), tailor your newly optimized content and be ready to retain users on your site

Once users land on your site after clicking on a PAA listing, what do you want them to see/do? Don’t do half the job, worry about the entire user journey from the start!

3. Test schema on your page

The SEO community has gone a bit cray-cray over the new FAQs schema, and my colleague Emily Potter wrote a great post on it. FAQs and how-to schema represent an interesting opportunity for SERP features such as featured snippets and PAAs, so why not give it a go? Having the right content & testing the right type of schema may help you win precious snippets or PAAs. In the future, I expect Google to increase the amount of markup that refers to informational queries, so stay tuned, and test, test, and test some more!

Think of the extended search volume opportunity

Without digging too much into this topic (it deserves a post on its own), I've been thinking about the following idea quite a lot recently: What if we started looking at PAAs as organic listings, hence counting the search volume for the keywords that trigger such PAAs? Since PAAs and other elements have been redefining the SERPs as we know them, maybe it's time for us marketers to redefine how these features are impacting our organic results. Maybe it's time for us to consider the extended search opportunity that such features bring to the table and not limit ourselves to the tactics mentioned above. Just something to think about!
PAA can be your friend

By now, I hope you've learned a bit more about People Also Ask and how it can help your SEO strategy moving forward. PAA can be your friend indeed if you're willing to spend time understanding how your organic visibility can be influenced by such features. The fact that PAAs are now popular for a large portfolio of queries makes me think Google considers them a new, key part of the user journey. With voice search on the rise, I expect Google to pay even more attention to elements like featured snippets and People Also Ask. I don't think they're going anywhere soon, so my dear fellow SEOs, you should start optimizing for the SERPs starting today!

Feel free to get in touch with us at Distilled or on Twitter at @SamuelMng to discuss this further, or just have a chat about who these people who also ask so many questions actually are...

via Blogger 5 Things You Should Know About "People Also Ask" & How to Take Advantage

Posted by Cyrus-Shepard

A good, solid competitive analysis can provide you with priceless insights into what's working for other folks in your industry, but it's not always easy to do right. In this week's edition of Whiteboard Friday, Cyrus walks you through how to perform a full competitive analysis, including:

Plus, don't miss the handy tips on which tools can help with this process and our brand-new guide (with free template) on SEO competitive analysis. Give it a watch and let us know your own favorite tips for performing a competitive analysis in the comments!

Video Transcription

Howdy, Moz fans. Welcome to another edition of Whiteboard Friday. I'm Cyrus Shepard. Today we're talking about a really cool topic: competitive analysis. This is an introduction to competitive analysis.

What is competitive analysis for SEO?

It's basically stealing your competitors' traffic. If you're new to SEO or you've been around awhile, this is a very valuable tactic to earn more traffic and rankings for your site. Instead of researching blindly what to go after, competitive analysis can tell you certain things with a high degree of accuracy that you won't find other ways, such as:

How to do an SEO competitive analysis

How does it do this? Well, instead of researching just in a keyword tool or a link tool, with competitive analysis you look at what's actually working for your competitors and use those tactics for yourself.

This often works so much better than the old-style ways of research, because you can actually improve upon what other people are actually doing and make those tactics work for you.

1. Identify your top competitors

So to get started with competitive analysis, the first challenge is to actually identify your top competitors.

This sounds easy. You probably think you know who your competitors are because you type a keyword into Google and you see who's ranking for your desired keyword. This does work, to a certain degree. Another way to do it is to look at the keywords you rank for, because the challenge is you probably rank for far more keywords than you believe you do. Moz, for instance, ranks for hundreds of thousands or possibly even millions of keywords, and we want to know at scale who are all the competitors ranking for all those different queries. This is very hard to do manually. Fortunately there are a lot of SEO tools out there, such as Ahrefs and SEMrush, that can look at all the keywords that you rank for across thousands of SERPs and then calculate, using advanced metrics, exactly who your true competitors are. I'm happy to announce that Moz just released a tool that does exactly this. We're going to link to it in the transcript below. It's called Domain Analysis. It's a free tool. Anybody can use it.
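To make the idea of finding competitors "at scale" a little more concrete, here is a minimal Python sketch. It is not how Domain Analysis or any other tool actually works; it simply counts how often other domains appear in the same SERPs as you. The `rank_competitors` function, the input shape, and the sample domains are all hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def rank_competitors(serps, own_domain):
    """Count how often each other domain shares a SERP with own_domain.

    serps maps a keyword to the list of ranking URLs (hypothetical input shape).
    """
    counts = Counter()
    for urls in serps.values():
        domains = {urlparse(u).netloc.lower() for u in urls}
        if own_domain not in domains:
            continue  # only look at SERPs where we already rank
        counts.update(domains - {own_domain})
    return counts.most_common()


# Illustrative data only.
serps = {
    "seo tools": ["https://example.com/a", "https://rival-one.test/x", "https://rival-two.test/y"],
    "link building": ["https://example.com/b", "https://rival-one.test/z"],
    "cat food": ["https://unrelated.test/c"],
}
competitors = rank_competitors(serps, "example.com")
print(competitors)
```

A real tool would presumably weight by ranking position and search volume; a raw overlap count is only the simplest possible starting point.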

You simply type in your domain, and we look through all the keywords that your site ranks for in our database, we look at all the competitors, and we use some advanced heuristics and we match those up and we tell you who your true competitors are. Once you know your true competitors, you can continue with the rest of the analysis.

2. Perform a keyword gap analysis

The first step that most people take in doing an SEO competitive analysis is identifying the keyword gap. Now for a long time, when I was new to SEO, I heard this term "keyword gap" and I didn't really know what it meant. But it's actually really simple. It's simply what keywords do my competitors rank for that I don't rank for, and that's the gap. The idea is that we want to close that gap if the keyword is valuable or high volume. The trick is you can do this on your own manually. You can see all the keywords you rank for using an advanced keyword tool and then list all the keywords your competitors rank for and then combine those lists in Excel. It's a long, tedious process. Fortunately, again, major SEO tools, such as Moz, can do this at scale for you within seconds. If you go to Moz Keyword Explorer, you simply enter your domain, enter your top competitor's domain that we found in this first step, and it will list all the keywords that your competitors rank for that you don't rank for.
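If you do want to try the manual route, the "combine those lists" step is just a set difference. The sketch below assumes you have exported your own keywords and a competitor's keywords with search volumes; the `keyword_gap` function, the `min_volume` threshold, and the sample numbers are made up for illustration.

```python
def keyword_gap(own_keywords, competitor_keywords, min_volume=0):
    """Return competitor keywords we do not rank for, highest volume first.

    competitor_keywords maps keyword -> monthly search volume (illustrative data).
    """
    own = {k.lower() for k in own_keywords}
    gap = {
        k: v
        for k, v in competitor_keywords.items()
        if k.lower() not in own and v >= min_volume
    }
    return sorted(gap.items(), key=lambda item: item[1], reverse=True)


own = ["seo audit", "keyword research"]
competitor = {"keyword research": 5400, "link building": 2900, "seo pricing": 300}
gap = keyword_gap(own, competitor, min_volume=500)
print(gap)
```

The volume filter stands in for the "valuable or high volume" judgment; relevance to your business still has to be checked by hand.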

You can then pull this into a spreadsheet and find keywords with high volume or keywords that are valuable and relevant to your business. This is an important point. You don't just want to go willy-nilly after any keyword your competitor ranks for. You want to actually find the keywords that are relevant to your business.

3. Perform a link gap analysis

So after you do that, we also have the cousin of a keyword gap analysis: link gap analysis.

This is a very similar concept, because you need links to rank. But where do you find the links? So you want to ask, "Who links to my competitors but does not link to me?" The theory here is that if someone is linking to your competitor on a similar topic, they are more likely to link to you because they are in that business of linking out to that type of content. An advanced tip is you often want to look at two or more competitors. The idea is that if someone is linking to multiple sources but not to you, it's more likely they'll link to you if you have superior content. Again, SEO tools can provide something like this. You can list all the backlinks to yourself or your competitors and combine them in a spreadsheet. But the tools make it much easier. In Moz's Link Explorer, you simply enter your competitor, you enter another competitor and yours, and you can find all the people who are linking to those competitors but not to you.
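The spreadsheet version of this is easy to sketch in Python. The example assumes you have already exported the linking domains for yourself and for each competitor; the `link_gap` function and the domains are hypothetical, and `min_competitors=2` reflects the "two or more competitors" tip.

```python
def link_gap(own_links, competitor_links, min_competitors=2):
    """Find linking domains that link to several competitors but not to us.

    own_links is a set of domains; competitor_links maps a competitor name
    to the set of domains linking to it (illustrative data).
    """
    counts = {}
    for links in competitor_links.values():
        for domain in links - own_links:
            counts[domain] = counts.get(domain, 0) + 1
    return sorted(d for d, n in counts.items() if n >= min_competitors)


own_links = {"blog-a.test"}
competitor_links = {
    "rival-one": {"blog-a.test", "news-b.test", "forum-c.test"},
    "rival-two": {"news-b.test", "forum-c.test", "wiki-d.test"},
}
prospects = link_gap(own_links, competitor_links)
print(prospects)
```

The same function works at the page level if you pass sets of linking page URLs instead of domains.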

An advanced tip that I like to use is do it at the page level. Don't look for domains that are linking to your competitors. Look for specific pages and you can do this in Link Explorer. We're going to show you in a little more detail in a guide I'm going to link to at the bottom of this post.

4. Perform a top content analysis

So we understand links, we understand the keywords. But what content do we want to create?

The idea is if other people are linking to these things, then it's highly probable that you can earn links with similar but better content. So the idea is you go to a tool like Link Explorer. You can sort by top pages, and you pick out the content that has the most links for your competitor. Then don't just re-create the content, but make it better. This is called the skyscraper technique, the idea of finding content that does really well and then making it better. Then once you have this, you go back to your link gap analysis and you reach out to those people who are linking to that content and you ask them for links, showing them the better content.

So that's it in a nutshell. When we put it all together, we have a very valuable process. We can go back to our individual pages, look at those pages that are ranking for our competitors. When you're all done, you can actually take your page, plug it into your keyword gap, and see all the keywords the page is ranking for. Our original keyword gap analysis looked at the domain, but now we just want to know what the page is ranking for. We can add that into our own page and make the page even better. We can again reach out to the same people who are linking to this page, show them our better content, and that is the process.

New Guide & Free Template

Whew, I'm exhausted. This is a huge process. I went over it really quickly. Fortunately, if it went by a little fast for you, we just released a guide, "An Introduction to SEO Competitive Analysis." We're going to link to it.

It explains all these processes in much more detail. It's free to use. I hope you enjoy it. Hey, I really enjoyed making this video. If you found value in it, give it a thumbs up. Please share on social media and we'll talk to you next time. Thanks, everybody.

Video transcription by Speechpad.com

via Blogger Intro to SEO Competitive Analysis 101 - Whiteboard Friday

Posted by MiriamEllis

This post contains an excerpt from our new primer: The Practical Guide to Franchise Marketing.

Planet Fitness, Great Clips, Ace Hardware… you can imagine the sense of achievement the leadership of these famous franchises must enjoy in making it to the top of lists like Entrepreneur’s 500. Behind the scenes of success, all competitive franchisors and franchisees have had to manage a major shift, one that centers on customers and their radically altered consumer journeys.

Research online, buy offline. Always-on laptops and constant companion smartphones are where fingers do the walking now, before feet cross the franchise threshold. Statistics tell the story of a public that searches online prior to the 90% of purchases they still make in physical stores. And while opportunity abounds, “being there” for the customers wherever they are in their journey has presented unique challenges for franchises. Who manages which stage of the journey? Franchisor or franchisee? Getting it right means meeting new shopping habits head-on, and re-establishing clear sight-lines and guidelines for all contributors to the franchise’s ultimate success.

Over the next few weeks, we’ll be publishing a series of articles dedicated to franchises. Want all the info now? Download The Practical Guide to Franchise Marketing:

Seeing the Shift

Whoever your franchise’s customers are, demographically, we can tell you one thing: they aren’t buying the same way they were ten, or even five years ago. For one thing, they used to decide to buy at your business as they browsed shelves or a menu. Now, 82% of smartphone users consult their devices before making an in-store purchase. Thank you, digital marketing! Traditionally, online marketing wasn’t something that franchisees had to think much about. And that was sort of a good thing because everyone knew their lane.

Then people started shopping differently and traditional lanes began merging. Customers started using online directories to get information. They started using online listings for discovering local businesses “near me” on a map. They started reading online reviews to make choices. They started browsing online inventories or menus in advance. They started using cell phones to make reservations, click to call you, or to get a digital voice assistant like Siri or Alexa to give them directions to the nearest and best local option.

Suddenly, what used to be a “worldwide” resource, the internet, began to be a local resource, too. And a really powerful one. People were finding, choosing, and building relationships online not just with the national brand, but with local shops, services and restaurants, often making choices in advance and showing up merely to purchase the products or services they want.

Stats State the Case

Consider how these statistics are impacting every franchise:

These are huge changes, and not ones the franchise model was entirely ready for. There used to be a clear geographic split between a franchise’s corporate awareness marketing and franchisee local sales marketing that was easy to understand. But the above statistics tell new tales. Now there is an immediacy and urgency to the way customers search and shop that’s blurring old lines.

Ace is the place with the helpful hardware folks

Even a memorable jingle like this one goes nowhere unless the franchisor/franchisee partnership is solid. How do customers know a brand like Ace stands by its slogan when they see a national TV campaign like this one which strives to distinguish the franchise from understaffed big box home improvement stores? Customers feel the nation-wide promise come true as soon as they walk into an Ace location:

A brand promo only works when all sides are equally committed to making each location of the business visible, accessible, and trusted. This joint effort applies to every aspect of how the business is marketed. From leadership to door greeter, everyone has a role to play. It’s defining those roles that can make or break the brand in the new consumer environment.

We’ll be exploring the nuts and bolts of building ideal partnerships in future installments of this series. Up next is The Unique World of Franchise Marketing. Keep an eye out for it on the blog at the end of the month! Don’t want to wait for the blog posts to come out? Download your copy now of our comprehensive look at unique franchise challenges and benefits:

via Blogger Franchise Marketing: How People Buy Now

Posted by Cyrus-Shepard

When Craig Bradford of Distilled reached out and asked if we'd like to run some SEO experiments on Moz using DistilledODN, our reply was an immediate "Yes please!" If you're not familiar with DistilledODN, it's a sophisticated platform that allows you to do a number of cool things in the SEO space:

When you find something that works, you see a positive result like this:

SEO experimentation is great, but almost nobody does it right because it's impossible to control for other factors. Yes, you updated your title tags, but did Google roll out an update today? Sure, you sped up your site, but did a bunch of spam just link to you? A/B split testing solves this problem by applying your changes to only a portion of your pages (typically 50%) and measuring the difference between the two groups. Fortunately, the ODN can deploy these changes near-instantly, up to thousands of pages at a time. It then crunches the numbers and tells you what's working, or not.

Testing Google's UGC link attribute

For our first test, we decided to tackle something simple and fast. Craig suggested looking at Google's new link attributes, and we were off! To summarize: Google recently introduced new link attributes for webmasters/SEOs to label links. Those attributes are:

On the Moz blog, all comments links are currently marked "nofollow" — following years of SEO best practices. Google has stated that using the new attributes won't give you a rankings boost. That said, we wanted to test for ourselves if changing these links to "ugc" would impact the rankings/traffic of our blog pages. Here's an example of a comment the ODN modified.

After we set the test running, 50% of blog posts had comments with "ugc" links, while 50% kept their original "nofollow" attributes.

Experiment results

We expected a "null" test, meaning we wouldn't see a significant impact. In fact, that's exactly what happened.

If we had detected a significant change, the probability cone at the bottom right would have pointed more dramatically up or down. In fact, at a 95% confidence level, the test predicted the change in traffic would land somewhere between a loss of 26,000 visits/month and a gain of 9,300 visits/month. Because that range includes zero, it's a null result. This is consistent with Google's statements that using the "ugc" attribute won't give you a ranking boost.

What should Moz test next?

While "null" tests aren't as fun as a positive result, we have a lot of cool A/B SEO testing ahead of us. The great thing is we can now test out changes with the ODN, and when we find one that works, pass that to our developers to make the changes permanently. This cuts down on needless development work and stops the guessing game.

We have a Trello board set up for test ideas, and we'd love to add some community ideas to the mix. The ODN is currently running on the Moz Blog and Q&A, so anything in these site sections is fair game. We're also looking at experiments where we use Moz data to inform these decisions. For example, a Moz Pro crawl identified that the Moz Blog titles currently use H2 tags instead of H1. Google recently indicated this likely shouldn't impact rankings, but wouldn't it be good to test?
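As an aside on how to read a result like the "ugc" test above, the rule of thumb is simple enough to write down. This is only a sketch of the interpretation, not of how the ODN computes its forecast; the `is_null_result` helper is hypothetical, and the two bounds are the figures quoted in the results.

```python
def is_null_result(lower, upper):
    """Treat a test as inconclusive when the confidence interval spans zero."""
    return lower <= 0 <= upper


# Bounds quoted in the experiment results, in visits/month.
result = is_null_result(-26_000, 9_300)
print(result)
```

An interval that sits entirely above or below zero would be the signal worth passing to developers.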

What wild/clever/ridiculous/obvious SEO things should we test? With each good test, we'll publish the results. Leave your ideas in the comments below.

Big thanks to the Distilled Team, including Will Critchlow and Tom Anthony, for embarking on this journey with us. And if you'd like to learn more about DistilledODN and SEO split testing in general, this post is highly recommended.

via Blogger New SEO Experiments: A/B Split Testing Google's UGC Attribute

Posted by cml63

A trend we’ve been noticing at Go Fish Digital is that more and more of our clients have been using the Shopify platform. While we initially thought this was just a coincidence, we can see that the data tells a different story:

The Shopify platform is now more popular than ever. Looking at BuiltWith usage statistics, we can see that usage of the CMS has more than doubled since July 2017. Currently, 4.47% of the top 10,000 sites are using Shopify.

Since we’ve worked with a good amount of Shopify stores, we wanted to share our process for common SEO improvements we help our clients with. The guide below should outline some common adjustments we make on Shopify stores.

What is Shopify SEO?

Shopify SEO simply means SEO improvements that are more unique to Shopify than other sites. While Shopify stores come with some useful things for SEO, such as a blog and the ability to redirect, it can also create SEO issues such as duplicate content. Some of the most common Shopify SEO recommendations are:

We’ll go into how we handle each of these recommendations below:

Duplicate content

In terms of SEO, duplicate content is the highest priority issue we’ve seen created by Shopify. Duplicate content occurs when either duplicate or similar content exists on two separate URLs. This creates issues for search engines as they might not be able to determine which of the two pages should be the canonical version. On top of this, oftentimes link signals are split between the pages. We’ve seen Shopify create duplicate content in several different ways:

Duplicate product pages

Shopify creates this issue within their product pages. By default, Shopify stores allow their /products/ pages to render at two different URL paths:

Shopify accounts for this by ensuring that all /collections/.*/products/ pages include a canonical tag to the associated /products/ page. Notice how the URL in the address differs from the “canonical” field:

While this certainly helps Google consolidate the duplicate content, a more alarming issue occurs when you look at the internal linking structure. By default, Shopify will link to the non-canonical version of all of your product pages.
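One way to audit this on your own store is to map each collection-scoped product URL to the /products/ URL its canonical tag should point to. The Python sketch below does that with a regular expression based on the /collections/.*/products/ pattern described above; the `canonical_product_url` helper and the example shop domain are hypothetical, and it assumes a collection handle contains no slashes.

```python
import re
from urllib.parse import urlsplit, urlunsplit

# Matches /collections/<collection-handle>/products/<product-handle>
COLLECTION_PRODUCT = re.compile(r"^/collections/[^/]+(/products/[^/]+)/?$")


def canonical_product_url(url):
    """Map a collection-scoped product URL to its /products/ equivalent."""
    parts = urlsplit(url)
    match = COLLECTION_PRODUCT.match(parts.path)
    if not match:
        return url  # already canonical, or not a product URL at all
    return urlunsplit(
        (parts.scheme, parts.netloc, match.group(1), parts.query, parts.fragment)
    )


link = "https://shop.example.test/collections/shirts/products/blue-tee"
fixed = canonical_product_url(link)
print(fixed)
```

Running every internal link from a crawl through a function like this gives a quick list of links that point at the non-canonical path.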

Thus, Shopify creates your entire site architecture around non-canonical links by default. This creates a high-priority SEO issue because the website is sending Google conflicting signals:

While canonical tags are usually respected, remember Google does treat these as hints instead of directives. This means that you’re relying on Google to make a judgement about whether or not the content is duplicate each time that it crawls these pages. We prefer not to leave this up to chance, especially when dealing with content at scale.

Adjusting internal linking structure

Fortunately, there is a relatively easy fix for this. We’ve been able to work with our dev team to adjust the code in the product.grid-item.liquid file. Following those instructions will allow your Shopify site’s collections pages to point to the canonical /products/ URLs.

Duplicate collections pages

As well, we’ve seen many Shopify sites that create duplicate content through the site’s pagination. More specifically, a duplicate is created of the first collections page in a particular series. This is because once you're on a paginated URL in a series, the link to the first page will contain “?page=1”:

However, this will almost always be a duplicate page. A URL with “?page=1” will almost always contain the same content as the original non-parameterized URL. Once again, we recommend having a developer adjust the internal linking structure so that the first paginated result points to the canonical page.

Product variant pages

While this is technically an extension of Shopify’s duplicate content from above, we thought this warranted its own section because this isn’t necessarily always an SEO issue. It’s not uncommon to see Shopify stores where multiple product URLs are created for the same product with slight variations. In this case, this can create duplicate content issues as oftentimes the core product is the same, but only a slight attribute (color for instance) changes. This means that multiple pages can exist with duplicate/similar product descriptions and images. Here is an example of duplicate pages created by a variant: https://recordit.co/x6YRPkCDqG

If left alone, this once again creates an instance of duplicate content. However, variant URLs do not have to be an SEO issue. In fact, some sites could benefit from these URLs as they allow you to have indexable pages that could be optimized for very specific terms. Whether or not these are beneficial is going to differ on every site. Some key questions to ask yourself are:

For a more in-depth guide, Jenny Halasz wrote a great article on determining the best course of action for product variations. If your Shopify store contains product variants, then it’s worth determining early on whether or not these pages should exist at a separate URL. If they should, then you should create unique content for every one and optimize each for that variant’s target keywords.

Crawling and indexing

After analyzing quite a few Shopify stores, we’ve found some SEO items that are unique to Shopify when it comes to crawling and indexing. Since this is very often an important component of e-commerce SEO, we thought it would be good to share the ones that apply to Shopify.

Robots.txt file

A very important note is that in Shopify stores, you cannot adjust the robots.txt file. This is stated in their official help documentation. While you can add the “noindex” to pages through the theme.liquid, this is not as helpful if you want to prevent Google from crawling your content altogether.

Here are some sections of the site that Shopify will disallow crawling in:

While it's nice that Shopify creates some default disallow commands for you, the fact that you cannot adjust the robots.txt file can be very limiting. The robots.txt is probably the easiest way to control Google’s crawl of your site as it's extremely easy to update and allows for a lot of flexibility. You might need to try other methods of adjusting Google’s crawl such as “nofollow” or canonical tags.

Adding the “noindex” tag

While you cannot adjust the robots.txt, Shopify does allow you to add the “noindex” tag. You can exclude a specific page from the index by adding the following code to your theme.liquid file. As well, if you want to exclude an entire template, you can use this code:

Redirects

Shopify does allow you to implement redirects out-of-the-box, which is great. You can use this for consolidating old/expired pages or any other content that no longer exists. You can do this by going to Online Store > Navigation > URL Redirects. So far, we haven't found a way to implement global redirects via Shopify. This means that your redirects will likely need to be 1:1.

Log files

Similar to the robots.txt, it’s important to note that Shopify does not provide you with log file information. This has been confirmed by Shopify support.

Structured data

Product structured data

Overall, Shopify does a pretty good job with structured data. Many Shopify themes should contain “Product” markup out-of-the-box that provides Google with key information such as your product’s name, description, price etc. This is probably the highest priority structured data to have on any e-commerce site, so it’s great that many themes do this for you. Shopify sites might also benefit from expanding the Product structured data to collections pages as well. This involves adding the Product structured data to define each individual product link in a product listing page. The good folks at Distilled recommend including this structured data on category pages.
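For readers who haven't seen “Product” markup before, here is a rough Python sketch that builds a minimal schema.org Product object as JSON-LD, covering the name, description, and price fields mentioned above. It is an illustration of the markup's shape, not what any particular Shopify theme outputs; the `product_jsonld` helper and the sample product are made up.

```python
import json


def product_jsonld(name, description, price, currency):
    """Build a minimal schema.org Product object serialized as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
    }
    return json.dumps(data, indent=2)


# Illustrative product only.
markup = product_jsonld("Blue Tee", "A plain blue cotton t-shirt.", 19.5, "USD")
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

On a collections page, the same idea would be repeated once per listed product.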

Article structured data

As well, if you use Shopify’s blog functionality, you should use “Article” structured data. This is a fantastic schema type that lets Google know that your blog content is more editorial in nature. We’ve seen that Google seems to pull content with “Article” structured data into platforms such as Google Discover and the “Interesting Finds” sections in the SERPs. Ensuring your content contains this structured data may increase the chances your site’s content is included in these sections.

BreadcrumbList structured data

Finally, one addition that we routinely make to Shopify sites is breadcrumb internal links with BreadcrumbList structured data. We believe breadcrumbs are crucial to any e-commerce site, as they provide users with easy-to-use internal links that indicate where they are within the hierarchy of a website. As well, these breadcrumbs can help Google better understand the website’s structure. We typically suggest adding site breadcrumbs to Shopify sites and marking those up with BreadcrumbList structured data to help Google better understand those internal links.

Keyword research

Performing keyword research for Shopify stores will be very similar to the research you would perform for other e-commerce stores. Some general ways to generate keywords are:

Keyword optimization

Similar to Yoast SEO, Shopify does allow you to optimize key elements such as your title tags, meta descriptions, and URLs. Where possible, you should be using your target keywords in these elements. To adjust these elements, you simply need to navigate to the page you wish to adjust and scroll down to “Search Engine Listing Preview”:

Adding content to product pages

If you decide that each individual product should be indexed, ideally you’ll want to add unique content to each page. Initially, your Shopify products may not have unique on-page content associated with them. This is a common issue for Shopify stores, as oftentimes the same descriptions are used across multiple products or no descriptions are present. Adding product descriptions with on-page best practices will give your products the best chance of ranking in the SERPs.

However, we understand that it’s time-consuming to create unique content for every product that you offer. With clients in the past, we’ve taken a targeted approach as to which products to optimize first. We like to use the “Sales By Product” report which can help prioritize which are the most important products to start adding content to. You can find this report in Analytics > Dashboard > Top Products By Units Sold.

Shopify blog

Shopify does include the ability to create a blog, but we often see this missing from a large number of Shopify stores. It makes sense, as revenue is the primary goal of an e-commerce site, so the initial build of the site is product-focused. However, we live in an era where it’s getting harder and harder to rank product pages in Google. For instance, the below screenshot illustrates the top 3 organic results for the term “cloth diapers”:

While many would assume that this is primarily a transactional query, we’re seeing Google is ranking two articles and a single product listing page in the top three results. This is just one instance of a major trend we’ve seen where Google is starting to prefer to rank more informational content above transactional. By excluding a blog from a Shopify store, we think this results in a huge missed opportunity for many businesses. The inclusion of a blog allows you to have a natural place where you can create this informational content. If you’re seeing that Google is ranking more blog/article types of content for the keywords mapped to your Shopify store, your best bet is to go out and create that content yourself. If you run a Shopify store (or any e-commerce site), we would urge you to take the following few steps:

As an example, we have a client that was interested in ranking for the term “CRM software,” an extremely competitive keyword. When analyzing the SERPs, we found that Google was ranking primarily informational pages about “What Is CRM Software?” Since they only had a product page that highlighted their specific CRM, we suggested the client create a more informational page that talked generally about what CRM software is and the benefits it provides. After creating and optimizing the page, we soon saw a significant increase in organic traffic (credit to Ally Mickler):

The issue that we see on many Shopify sites is that there is very little focus on informational pages despite the fact that those perform well in the search engines. Most Shopify sites should be using the blogging platform, as this will provide an avenue to create informational content that will result in organic traffic and revenue.

Apps

Similar to WordPress’s plugins, Shopify offers “Apps” that allow you to add advanced functionality to your site without having to manually adjust the code. However, unlike WordPress, most of the Shopify Apps you’ll find are paid. This will require either a one-time or monthly fee.

Shopify apps for SEO

While your best bet is likely teaming up with a developer who's comfortable with Shopify, here are some Shopify apps that can help improve the SEO of your site.

Is Yoast SEO available for Shopify?

Yoast SEO is exclusively a WordPress plugin. There is currently no Yoast SEO Shopify App.

Limiting your Shopify apps

Similar to WordPress plugins, Shopify apps will inject additional code onto your site. This means that adding a large number of apps can slow down the site. Shopify sites are especially susceptible to bloat, as many apps are focused on improving conversions. Oftentimes, these apps will add more JavaScript and CSS files which can hurt page load times. You’ll want to be sure that you regularly audit the apps you’re using and remove any that are not adding value or being utilized by the site.

Client results

We’ve seen pretty good success with our clients that use Shopify stores. Below you can find some of the results we’ve been able to achieve for them. However, please note that these case studies do not just include the recommendations above. For these clients, we have used a combination of some of the recommendations outlined above as well as other SEO initiatives.

In one example, we worked with a Shopify store that was interested in ranking for very competitive terms surrounding the main product their store focused on. We evaluated their top performing products in the “Sales by product” report. This resulted in a large effort to work with the client to add new content to their product pages as they were not initially optimized. This combined with other initiatives has helped improve their first page rankings by 113 keywords (credit to Jennifer Wright & LaRhonda Sparrow).

In another instance, a client came to us with an issue that they were not ranking for their branded keywords. Instead, third-party retailers that also carried their products were often outranking them. We worked with them to adjust their internal linking structure to point to the canonical pages instead of the duplicate pages created by Shopify. We also optimized their content to better utilize the branded terminology on relevant pages. As a result, they’ve seen a nice increase in overall rankings in just several months' time.

Moving forward

As Shopify usage continues to grow, it will be increasingly important to understand the SEO implications that come with the platform. Hopefully, this guide has provided you with additional knowledge that will help make your Shopify store stronger in the search engines.

via Blogger Shopify SEO: The Guide to Optimizing Shopify

Posted by BritneyMuller

Featured snippets are still the best way to take up primo SERP real estate, and they seem to be changing all the time. Today, Britney Muller shares the results of the latest Moz research into featured snippet trends and data, plus some fantastic tips and tricks for winning your own. (And we just can't resist: if this whets your appetite for all things featured snippet, save your spot in Britney's upcoming webinar with even more exclusive data and takeaways!)

Video Transcription

Hey, Moz fans. Welcome to another edition of Whiteboard Friday. Today we're talking about all things featured snippets, so what are they, what sort of research have we discovered about them recently, and what can you take back to the office to target them and, basically, steal them in search results.

What is a featured snippet?

So to be clear, what is a featured snippet? If you were to do a search for "are crocs edible," you would see a featured snippet like this:

Essentially, it's giving you information about your search and citing a website. This isn't to be confused with an answer box, where it's just an answer and there's no citation. If you were to search how many days are in February, Google will probably just tell you 28 and there's no citation. That's an answer box as opposed to a featured snippet.

Need-to-know discoveries about featured snippets

Now what have we recently discovered about featured snippets?

23% of all search result pages include a featured snippet

Well, we know that they're on 23% of all search result pages. That's wild. This is up over 165% since 2016.

We know that they're growing.

There are 5 general types of featured snippets

We know that Google continues to provide more and more in different spaces, and we also know that there are five general types of featured snippets:

The most common that we see are the paragraph and the list. The list can come in numerical format or bullets. But we also see tables and then video. The video is interesting because it will just show a specific section of a video that it thinks you need to consume in order to get your answer, which is always interesting. Lately, we have started noticing accordions, and we're not sure if they're testing this or if it might be rolled out. But they're a lot like People Also Ask boxes in that they expand and almost show you additional featured snippets, which is fascinating.

Paragraphs (50%) and lists (37%) are the most common types of featured snippets

Another important thing to take away is that we know paragraphs and lists are the most common, and we can see that here. Fifty percent of all featured snippet results are paragraphs. Thirty-seven percent are lists. It's a ton. Then it kind of whittles down from there. Nine percent are tables, and then just under two percent are video and under two percent are accordion. Kind of good to know.

Half of all featured snippets are part of a carousel

Interestingly, half of all featured snippets are part of a carousel. What we mean by a carousel is when you see these sort of circular options within a featured snippet at the bottom.

People Also Ask boxes are on 93.8% of featured snippet SERPs

We also know that People Also Ask boxes are on 93.8% of featured snippet SERPs, meaning they're almost always present when there's a featured snippet, which is fascinating. I think there's a lot of good data we can get from these People Also Ask questions to kind of seed your keyword research and better understand what it is people are looking for. "Are Crocs supposed to be worn with socks?" It's a very important question. You have to understand this stuff.

Informational sites are winning

We see that the sites that are providing finance information and educational information are doing extremely well in the featured snippet space. So again, something to keep in mind.

Be a detective and test!

You should always be exploring the snippets that you might want to rank for.

Start being a detective and looking at all those things. So now to kind of the good stuff.

How to win featured snippets

What is it that you can specifically do to potentially win a featured snippet? These are sort of the four boiled down steps I've come up with to help you with that.

1. Know which featured snippet keywords you rank on page one for

So number one is to know which featured snippet keywords your site already ranks for. It's really easy to do in Keyword Explorer at Moz.

So if you search by root domain and you just put in your website into Moz Keyword Explorer, it will show you all of the ranking keywords for that specific domain. From there, you can filter by ranking or by range, from 1 to 10:

What are those keywords that you currently rank 1 to 10 on? Then you add those keywords to a list. Once they populate in your list, you can filter by a featured snippet.

This is sort of the good stuff. This is your playground. This is where your opportunities are. It gets really fun from here.

2. Know your searchers' intent

Number two is to know your searchers' intent. If one of your keywords was "Halloween costume DIY" and the search result page was all video and images and content that was very visual, you have to provide visual content to compete with an intent like that. There's obviously an intent behind the search where people want to see what it is and help in that process. It's a big part of crafting content to rank in search results but also featured snippets. Know the intent.

3. Provide succinct answers and content

Number three, provide succinct answers and content. Omit needless words. We see Google providing short, concise information, especially for voice results. We know that's the way to go, so I highly suggest doing that.

4. Monitor featured snippet targets

Number four, monitor those featured snippet targets, whether you're actively trying to target them or you currently have them. STAT provides really, really great alerts. You can actually get an email notification if you lose or win a featured snippet. It's one of the easiest ways I've discovered to keep track of all of these things.

Pro tip: Add a tl;dr summary

A pro tip is to add a "too long, didn't read" summary to your most popular pages. You already know the content that most people come to your site for or maybe the content that does the best in your conversions, whatever that might be. If you can provide summarized content about that page, just key takeaways or whatever that might be at the top or at the bottom, you could potentially rank for all sorts of featured snippets. So really, really cool, easy stuff to kind of play around with and test.

Want more tips and tricks? We've got a webinar for that!

Lastly, for more tips and tricks, you should totally sign up for the featured snippet webinar that we're doing. I'm hosting it in a couple weeks.
I know spots are limited, but we'll be sharing all of the research that we've discovered and even more takeaways and tricks. So hopefully you enjoyed that, and I appreciate you watching this Whiteboard Friday. Keep me posted on any of your featured snippet battles or what you're trying to get or any struggles down below in the comments. I look forward to seeing you all again soon. Thank you so much for joining me. I'll see you next time.

Video transcription by Speechpad.com

via Blogger Featured Snippets: What to Know & How to Target - Whiteboard Friday

Posted by Cyrus-Shepard

If you want a quick overview of top SEO metrics for any domain, today we're officially launching a new free tool for you: Domain Analysis.

One thing Moz does extremely well is SEO data: data that consistently sets industry standards and is respected both for its size (35 trillion links, 500 million keyword corpus) and its accuracy. We're talking things like Domain Authority, Spam Score, Keyword Difficulty, and more, which are used by tens of thousands of SEOs across the globe. With Domain Analysis, we wanted to combine this data in one place and quickly show it to people without requiring them to create a login or sign up for an account. The tool is free and showcases a preview of many top SEO metrics in one place, including:

Many of these metrics are previews that you can explore more in-depth using Moz tools such as Link Explorer and Keyword Explorer.

New experimental metrics

Domain Analysis includes a number of new, experimental metrics not available anywhere else. These are metrics developed by our search scientist Dr. Pete Meyers that we're interested in exploring because we believe they are useful to SEO. Those metrics include:

Keywords by Estimated Clicks

You know your competitor ranks #1 for a keyword, but how many clicks does that generate for them? Keywords by Estimated Clicks uses ranking position, search volume, and estimated click-through rate (CTR) to estimate just how many clicks each keyword generates for that website.

Top Featured Snippets

Search results with featured snippets can be very different than those without, as whoever "wins" the featured snippet at position zero can expect outsized clicks and attention. These are potentially valuable keywords. Top Featured Snippets tells you which keywords a site ranks for that trigger a featured snippet, and also whether or not that site owns the snippet.
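To make the idea behind Keywords by Estimated Clicks concrete, here is a minimal sketch of how ranking position, search volume, and an estimated CTR could be combined. The CTR values, the default rate, and the function name are placeholder assumptions for illustration only; they are not Moz's actual model or data.

```python
# Hypothetical CTR-by-position table. These values are placeholders chosen
# for illustration; they are NOT Moz's figures.
ASSUMED_CTR_BY_POSITION = {1: 0.30, 2: 0.15, 3: 0.10, 4: 0.07, 5: 0.05}
ASSUMED_DEFAULT_CTR = 0.02  # assumed rate for position 6 and lower


def estimate_clicks(position: int, monthly_volume: int) -> float:
    """Estimate monthly clicks as search volume times an assumed CTR."""
    if position < 1:
        raise ValueError("position must be 1 or greater")
    ctr = ASSUMED_CTR_BY_POSITION.get(position, ASSUMED_DEFAULT_CTR)
    return monthly_volume * ctr


if __name__ == "__main__":
    # Made-up keywords: (keyword, ranking position, monthly search volume)
    rankings = [("example keyword a", 1, 1000), ("example keyword b", 7, 5000)]
    for keyword, position, volume in rankings:
        print(keyword, round(estimate_clicks(position, volume)))
```

With a table like this, a lower-ranked keyword with high volume can still out-earn a #1 ranking on a low-volume keyword, which is the kind of comparison the metric is meant to surface.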

Branded Keywords

Branded keywords are a type of navigational query in which users are searching for a particular site. These can be some of the website's most valuable keywords. Typically, it's very hard, for anyone outside of Google, to accurately know what a site's branded keywords actually are. Using some nifty computations in our database, here you'll find the highest volume keywords reflecting the site's brand. Cool, right?

Top Search Competitors

Knowing who your top search competitors are is important for any serious SEO competitive analysis. Sadly, most people simply guess. You may know who competes for your favorite keyword, but what happens when you rank for hundreds, thousands, or hundreds of thousands of keywords? Fortunately, we can comb through our vast database and make these calculations for you. Top Search Competitors shows you the competitors that compete for the same keywords as this domain, ranked by visibility.

Top Questions

"People Also Ask" boxes have become a ubiquitous feature of Google search results, and represent a good starting point for keyword research and topic optimization. Top Questions shows questions mined from People Also Ask boxes for relevant keywords.

A few notes about the new Domain Analysis tool:

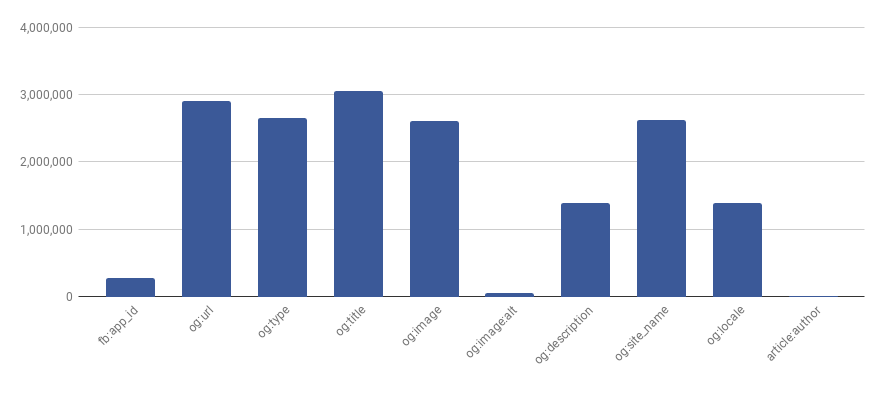

Also, we're looking for feedback! What do you think of the new Domain Analysis Tool? Let us know in the comments below.

p.s. Big thanks to Casey Coates, our smart-as-heck dev who put much of this together.

via Blogger Announcing Quick, Free SEO Metrics with a New Domain Analysis Tool

Posted by Catalin.Rosu

Not long ago, my colleagues and I at Advanced Web Ranking came up with an HTML study based on about 8 million index pages gathered from the top twenty Google results for more than 30 million keywords. We wrote about the markup results and how the top twenty Google results pages implement them, then went even further and obtained HTML usage insights on them.

What does this have to do with SEO?

The way HTML is written dictates what users see and how search engines interpret web pages. A valid, well-formatted HTML page also reduces possible misinterpretation (of structured data, metadata, language, or encoding) by search engines. This is intended to be a technical SEO audit, something we wanted to do from the beginning: a breakdown of HTML usage and how the results relate to modern SEO techniques and best practices. In this article, we’re going to address things like meta tags that Google understands, JSON-LD structured data, language detection, headings usage, social links & meta distribution, AMP, and more.

Meta tags that Google understands

When talking about the main search engines as traffic sources, sadly it's just Google and the rest, with DuckDuckGo gaining traction lately and Bing almost nonexistent. Thus, in this section we’ll be focusing solely on the meta tags that Google listed in the Search Console Help Center.

<meta name="description" content="...">

The meta description is a ~150 character snippet that summarizes a page's content. Search engines show the meta description in the search results when the searched phrase is contained in the description.

On the extremes, we found 685,341 meta elements with content shorter than 30 characters and 1,293,842 elements with the content text longer than 160 characters.

<title>

The title is technically not a meta tag, but it's used in conjunction with meta name="description". This is one of the two most important HTML tags when it comes to SEO. It's also a must according to W3C, meaning no page is valid with a missing title tag. Research suggests that if you keep your titles under a reasonable 60 characters then you can expect your titles to be rendered properly in the SERPs. In the past, there were signs that Google's search results title length was extended, but it wasn't a permanent change. Considering all the above, from the full 6,263,396 titles we found, 1,846,642 title tags appear to be too long (more than 60 characters) and 1,985,020 titles had lengths considered too short (under 30 characters).
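If you want to run the same kind of length check on your own pages, the sketch below shows one possible approach using only Python's standard library. The thresholds (30 to 60 characters for titles, 30 to 160 for descriptions) come from the figures above; the class and function names are illustrative assumptions and not the tooling used in the study.

```python
from html.parser import HTMLParser

# Thresholds taken from the figures quoted in the text above.
TITLE_MIN, TITLE_MAX = 30, 60
DESCRIPTION_MIN, DESCRIPTION_MAX = 30, 160


class HeadTextParser(HTMLParser):
    """Collects the <title> text and the meta description of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None  # stays None when no description tag exists
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.description = attributes.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def classify_length(text, minimum, maximum):
    """Return 'missing', 'too short', 'too long', or 'ok'."""
    if text is None:
        return "missing"
    length = len(text.strip())
    if length < minimum:
        return "too short"
    if length > maximum:
        return "too long"
    return "ok"


def audit_head(html):
    """Classify the title and meta description lengths of an HTML document."""
    parser = HeadTextParser()
    parser.feed(html)
    return {
        "title": classify_length(parser.title, TITLE_MIN, TITLE_MAX),
        "description": classify_length(
            parser.description, DESCRIPTION_MIN, DESCRIPTION_MAX
        ),
    }


if __name__ == "__main__":
    print(audit_head("<!DOCTYPE html><title>red</title>"))
```

Note that this sketch reports an absent title as "too short" rather than "missing", since an empty title and no title look the same to it; adjust that if you need to tell them apart.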

A short title isn't necessarily a problem; after all, the right length is subjective and depends on the website's business. Meaning can be expressed with fewer words, but a very short title is still a sign of a wasted optimization opportunity.

Another interesting thing is that, among the sites ranking on page 1–2 of Google, 351,516 (~5% of the total 7.5M) are using the same text for the title and h1 on their index pages. Also, did you know that with HTML5 you only need to specify the HTML5 doctype and a title in order to have a perfectly valid page?

<!DOCTYPE html>
<title>red</title>

<meta name="robots|googlebot">

“These meta tags can control the behavior of search engine crawling and indexing. The robots meta tag applies to all search engines, while the "googlebot" meta tag is specific to Google.”

So the robots meta directives provide instructions to search engines on how to crawl and index a page's content. Leaving aside the googlebot meta count which is kind of low, we were curious to see the most frequent robots parameters, considering that a huge misconception is that you have to add a robots meta tag in your HTML’s head. Here’s the top 5:

<meta name="google" content="nositelinkssearchbox">

“When users search for your site, Google Search results sometimes display a search box specific to your site, along with other direct links to your site. This meta tag tells Google not to show the sitelinks search box.”

Unsurprisingly, not many websites choose to explicitly tell Google not to show a sitelinks search box when their site appears in the search results.

<meta name="google" content="notranslate">

“This meta tag tells Google that you don't want us to provide a translation for this page.” - Meta tags that Google understands

There may be situations where providing your content to a much larger group of users is not desired. Just as it says in the Google support answer above, this meta tag tells Google that you don't want them to provide a translation for this page.

<meta name="google-site-verification" content="...">

“You can use this tag on the top-level page of your site to verify ownership for Search Console.”
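The Google-specific tags covered so far are all plain name/content pairs, so checking which ones a page declares is simple. The following is a minimal sketch using Python's standard html.parser; the class and function names are hypothetical and this is not the pipeline used for the study.

```python
from collections import Counter
from html.parser import HTMLParser


class GoogleMetaParser(HTMLParser):
    """Tallies robots/googlebot directives and notes Google-specific meta tags."""

    def __init__(self):
        super().__init__()
        self.robots_directives = Counter()
        self.flags = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = (attributes.get("name") or "").strip().lower()
        content = (attributes.get("content") or "").strip().lower()
        if name in ("robots", "googlebot"):
            # Directives are comma-separated, e.g. "noindex, nofollow".
            for directive in content.split(","):
                directive = directive.strip()
                if directive:
                    self.robots_directives[directive] += 1
        elif name == "google" and content in ("nositelinkssearchbox", "notranslate"):
            self.flags.add(content)
        elif name == "google-site-verification":
            self.flags.add("google-site-verification")


def summarize_google_meta(html):
    """Return (directive counts, sorted list of Google-specific tags found)."""
    parser = GoogleMetaParser()
    parser.feed(html)
    return dict(parser.robots_directives), sorted(parser.flags)


if __name__ == "__main__":
    sample = (
        '<meta name="robots" content="noindex, nofollow">'
        '<meta name="google" content="notranslate">'
    )
    print(summarize_google_meta(sample))
```

Running this over a batch of pages and summing the directive counts would give a frequency table of robots parameters for your own sample.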